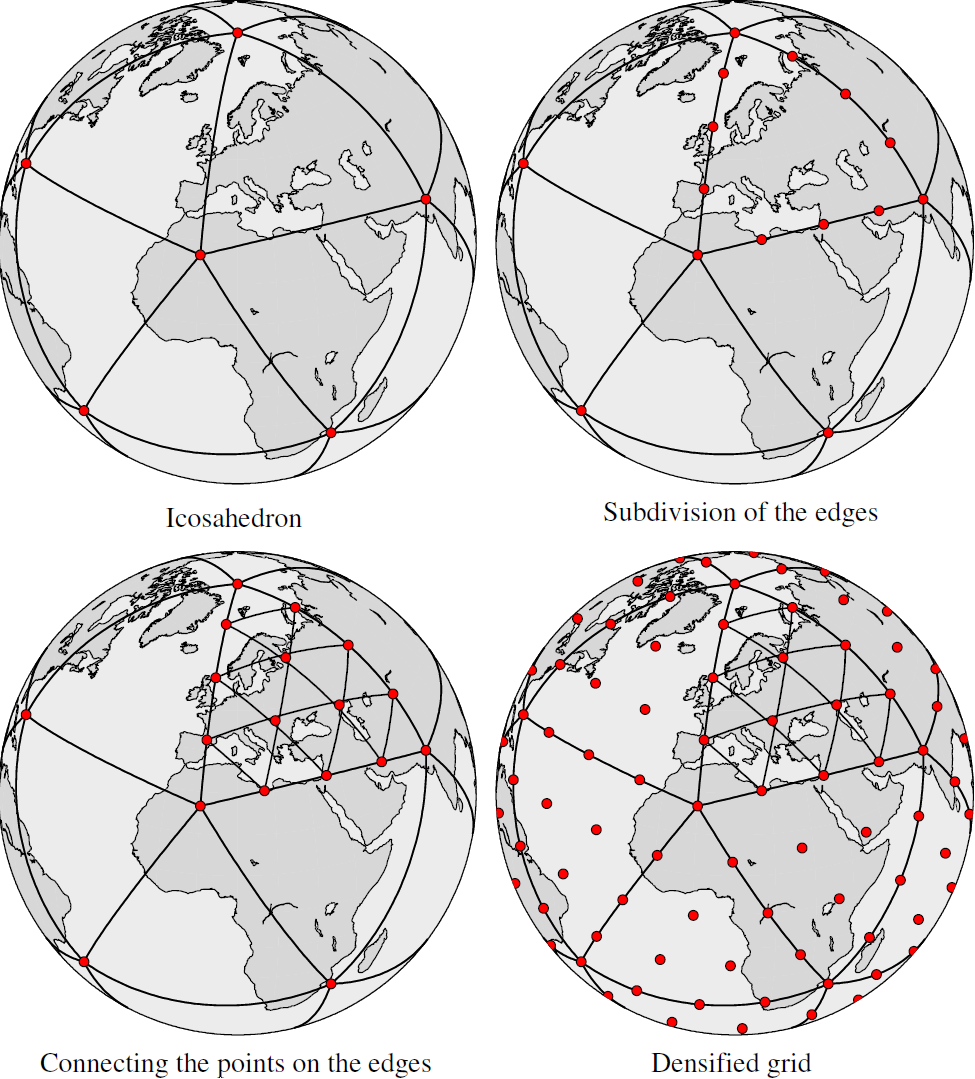

Research papers from the department were accepted to the 11th International Conference on Learning Representations (ICLR 2023). ICLR is the premier conference on deep learning where researchers gather to discuss their work in the fields of artificial intelligence, statistics, and data science.

Visual Classification via Description from Large Language Models

Sachit Menon (Columbia University), Carl Vondrick (Columbia University)

Keywords: vision-language models, CLIP, prompting, GPT-3, large language models, zero-shot recognition, multimodal

TL;DR: We enhance zero-shot recognition with vision-language models by comparing to category descriptors from GPT-3, enabling better performance in an interpretable setting that also allows for the incorporation of new concepts and bias mitigation.

Vision-language models (VLMs) such as CLIP have shown promising performance on a variety of recognition tasks using the standard zero-shot classification procedure: computing similarity between the query image and the embedded words for each category. By only using the category name, they neglect to make use of the rich context of additional information that language affords. The procedure gives no intermediate understanding of why a category is chosen and furthermore provides no mechanism for adjusting the criteria used towards this decision. We present an alternative framework for classification with VLMs, which we call classification by description. We ask VLMs to check for descriptive features rather than broad categories: to find a tiger, look for its stripes, its claws, and more. By basing decisions on these descriptors, we can provide additional cues that encourage using the features we want to be used. In the process, we can get a clear idea of what the model “thinks” it is seeing to make its decision; it gains some level of inherent explainability. We query large language models (e.g., GPT-3) for these descriptors to obtain them in a scalable way. Extensive experiments show our framework has numerous advantages past interpretability. We show improvements in accuracy on ImageNet across distribution shifts; demonstrate the ability to adapt VLMs to recognize concepts unseen during training; and illustrate how descriptors can be edited to effectively mitigate bias compared to the baseline.

CROM: Continuous Reduced-Order Modeling of PDEs Using Implicit Neural Representations

Peter Yichen Chen (Columbia University), Jinxu Xiang (Columbia University), Dong Heon Cho (Columbia University), Yue Chang (University of Toronto), G A Pershing (Columbia University), Henrique Teles Maia (Columbia University), Maurizio M Chiaramonte (Meta Reality Labs Research), Kevin Thomas Carlberg (Meta Reality Labs Research), Eitan Grinspun (University of Toronto)

Keywords: PDE, implicit neural representation, neural field, latent space traversal, reduced-order modeling, numerical methods

TL;DR: We accelerate PDE solvers via rapid latent space traversal of continuous vector fields leveraging implicit neural representations.

The long runtime of high-fidelity partial differential equation (PDE) solvers makes them unsuitable for time-critical applications. We propose to accelerate PDE solvers using reduced-order modeling (ROM). Whereas prior ROM approaches reduce the dimensionality of discretized vector fields, our continuous reduced-order modeling (CROM) approach builds a low-dimensional embedding of the continuous vector fields themselves, not their discretization. We represent this reduced manifold using continuously differentiable neural fields, which may train on any and all available numerical solutions of the continuous system, even when they are obtained using diverse methods or discretizations. We validate our approach on an extensive range of PDEs with training data from voxel grids, meshes, and point clouds.
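To make the idea behind "classification by description" from the Menon and Vondrick abstract concrete, here is a minimal Python sketch. It is not the authors' implementation. It assumes that a class score is the mean cosine similarity between the image embedding and the embeddings of that class's descriptors, and it uses made-up 3-dimensional vectors in place of real CLIP embeddings and GPT-3 descriptors. The function names `cosine` and `classify_by_description` are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def classify_by_description(image_emb, descriptor_embs):
    """Return (best_class, per-class scores, per-descriptor similarities).

    descriptor_embs maps class name -> {descriptor text: embedding}.
    Assumption: a class score is the mean similarity over its descriptors.
    """
    # Per-descriptor similarities double as the "explanation" for a decision.
    evidence = {
        cls: {desc: cosine(image_emb, emb) for desc, emb in descs.items()}
        for cls, descs in descriptor_embs.items()
    }
    scores = {cls: sum(sims.values()) / len(sims) for cls, sims in evidence.items()}
    best = max(scores, key=scores.get)
    return best, scores, evidence


# Toy embeddings (made up) standing in for real image/text encoder outputs.
descriptor_embs = {
    "tiger": {"stripes": [1.0, 0.1, 0.0], "claws": [0.8, 0.3, 0.1]},
    "zebra": {"black and white stripes": [0.6, 0.0, 0.8], "hooves": [0.0, 0.2, 1.0]},
}
image_emb = [0.9, 0.2, 0.1]

best, scores, evidence = classify_by_description(image_emb, descriptor_embs)
print(best, scores)
print(evidence[best])  # which descriptors supported the chosen class
```

Because the decision is built from per-descriptor similarities, editing the descriptor list for a class changes the criteria directly, which is the kind of adjustment the abstract describes.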
